The Type System Thesis

Canvas engineering is a type system for latent dynamics. This page explains the analogy and the open research questions.

New: Canvas Types + Program Layer

The Canvas Types module makes this concrete: declare Python types, then compile them to canvas schemas. The Program Layer extends it with typed process semantics: region families, carriers, scheduling, and compilation. See the runnable examples for end-to-end demonstrations.

The analogy

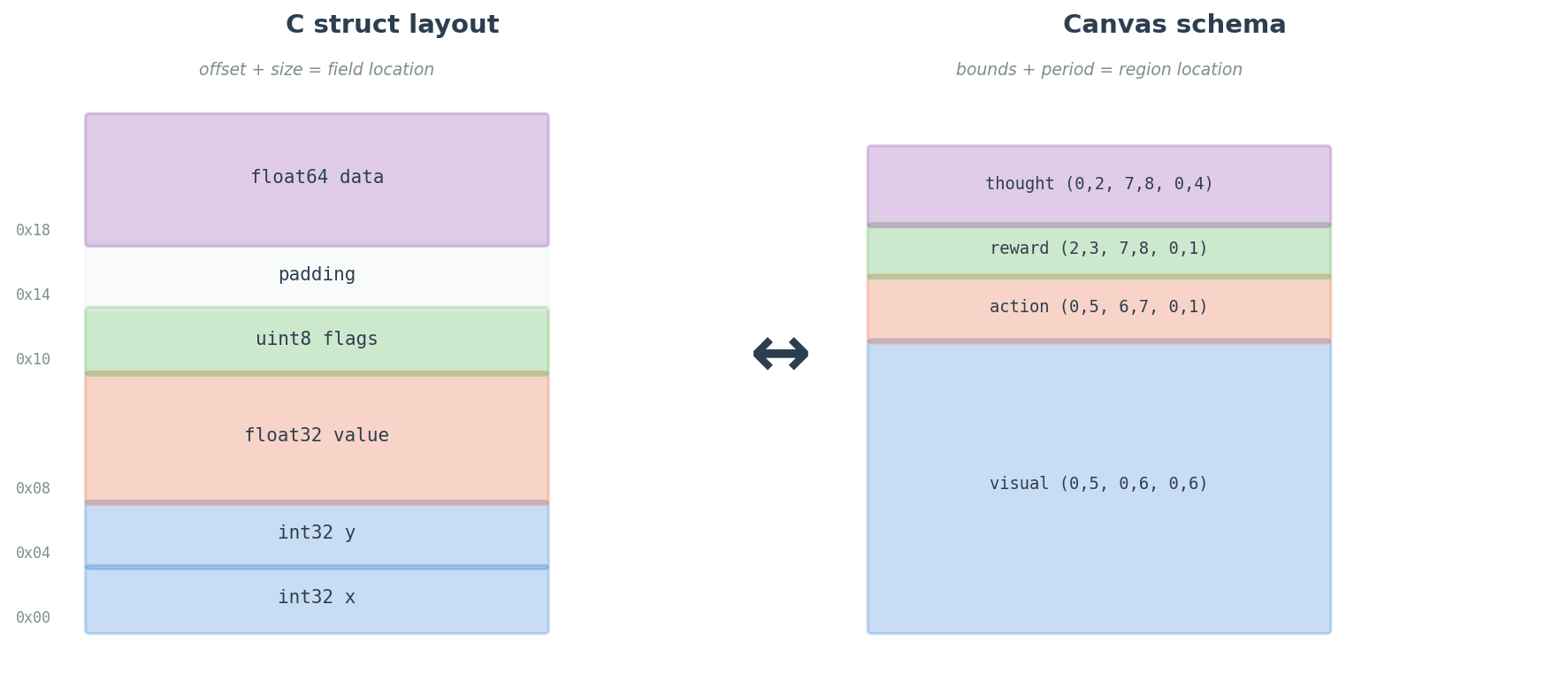

| Type system concept | Canvas equivalent | Implementation |
|---|---|---|
| Struct field (offset + size) | RegionSpec bounds | region_indices() |
| Field type annotation | period, loss_weight, semantic_type, default_attn | RegionSpec fields |
| Pointer / reference | Connection | CanvasTopology |
| Function signature | Topology pattern + fn type | attention_ops() |
| Memory layout | Flattened tensor positions | num_positions |
| ABI compatibility | Schema compatibility | compatible_regions() |
| Type-directed codegen | Loss mask from declarations | loss_weight_mask() |
| Type coercion cost | Transfer distance | transfer_distance() |

This is not a metaphor. region_indices() is literally a memory offset calculation, loss_weight_mask() is type-directed codegen for the loss function, and the topology acts as a calling convention between regions.
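To make the offset-calculation claim tangible, here is a minimal standalone sketch. It does not import the canvas library: the `Region` class, the half-open `(t0, t1, h0, h1, w0, w1)` bounds convention, the row-major flattening order, and the function signatures are all assumptions made for illustration, and the real `RegionSpec`, `region_indices()`, and `loss_weight_mask()` may differ.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

Grid = Tuple[int, int, int]  # (T, H, W)


@dataclass(frozen=True)
class Region:
    """Hypothetical stand-in for a RegionSpec: bounds plus a loss weight."""
    bounds: Tuple[int, int, int, int, int, int]  # half-open (t0, t1, h0, h1, w0, w1)
    loss_weight: float = 1.0


def region_indices(grid: Grid, region: Region) -> List[int]:
    """Flat positions of a region, assuming a row-major (T, H, W) layout."""
    _, H, W = grid
    t0, t1, h0, h1, w0, w1 = region.bounds
    return [
        (t * H + h) * W + w  # same arithmetic as a struct/array offset
        for t in range(t0, t1)
        for h in range(h0, h1)
        for w in range(w0, w1)
    ]


def loss_weight_mask(grid: Grid, regions: Dict[str, Region]) -> List[float]:
    """Per-position loss weights derived purely from the declarations."""
    T, H, W = grid
    mask = [0.0] * (T * H * W)
    for region in regions.values():
        for i in region_indices(grid, region):
            mask[i] = region.loss_weight
    return mask


# Illustrative layout: vision is input-only, action is the supervised output.
grid = (2, 2, 4)
regions = {
    "vision": Region(bounds=(0, 2, 0, 2, 0, 3), loss_weight=0.0),
    "action": Region(bounds=(0, 2, 0, 2, 3, 4), loss_weight=1.0),
}
action_idx = region_indices(grid, regions["action"])
mask = loss_weight_mask(grid, regions)
```

In this sketch the loss mask is never written by hand. It falls out of the region declarations, which is the sense in which the table calls it type-directed codegen.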

What the schema declares

A CanvasSchema combines a layout and a topology. Together they form a complete type signature that declares:

- Geometry — Where each modality lives in the (T, H, W) grid

- Frequency — How fast each region updates (period)

- Loss participation — Which regions are outputs, with what weight

- Connectivity — Who attends to whom, with what temporal constraints

- Function types — What kind of attention each connection uses

- Semantic types — What each modality means, as a machine-comparable embedding

Two agents with the same schema can share latent state directly. Two agents with different schemas can estimate compatibility via transfer_distance().
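As a rough illustration of how compatibility between different schemas could be estimated, the sketch below compares regions by the distance between their semantic embeddings. This page does not specify the metric behind `transfer_distance()`, so cosine distance is an assumption here, as are the `compatible_pairs` helper, the threshold, the region names, and the toy three-dimensional embeddings.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """1 - cosine similarity; an assumed stand-in for the real transfer metric."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def compatible_pairs(
    schema_a: Dict[str, Sequence[float]],
    schema_b: Dict[str, Sequence[float]],
    threshold: float,
) -> List[Tuple[str, str, float]]:
    """Region pairs whose embedding distance is within the threshold, closest first."""
    pairs = []
    for name_a, emb_a in schema_a.items():
        for name_b, emb_b in schema_b.items():
            d = cosine_distance(emb_a, emb_b)
            if d <= threshold:
                pairs.append((name_a, name_b, d))
    return sorted(pairs, key=lambda p: p[2])


# Toy semantic embeddings (hypothetical values) for two agents' regions.
agent_a = {"rgb_camera": [1.0, 0.0, 0.0], "joint_torque": [0.0, 1.0, 0.0]}
agent_b = {"depth_camera": [0.8, 0.0, 0.6], "gripper_force": [0.0, 0.6, 0.8]}

pairs = compatible_pairs(agent_a, agent_b, threshold=0.5)
```

Here the two camera regions pair up first and the two force-like regions second, while the cross-modal combinations exceed the threshold and are dropped.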

The open question: representation stability

Everything above assumes the platonic representation hypothesis applies: that declared canvas structure produces predictable, stable latent geometry. This is plausible but unproven.

If canvas schemas produce stable representations:

- Plug-and-play modality: Retrain only encoder/decoder surface layers

- Cross-model interop: Two models on the same schema can read each other's latents

- Compositional agents: Swap policy canvas while keeping perception frozen

Experiments that would test this (31+)

- Seed stability: Train identical schemas with different seeds, then measure CKA (centered kernel alignment) between corresponding regions.

- Topology → specialization: Different topologies, same data. Does connectivity predict specialization?

- Cross-model grafting: Freeze trained perception, graft fresh policy. Transfer cost?

- Embedding distance calibration: Train N modality pairs. Plot actual transfer cost vs. semantic embedding distance.
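For the seed-stability experiment, the comparison step might look like the sketch below. It implements linear CKA in pure Python so that it runs without dependencies; a real experiment would more likely use NumPy or PyTorch. The activation matrices are hypothetical toy data (rows are samples, columns are latent features of one region), and the choice of the linear variant of CKA is an assumption, since this page does not say which kernel would be used.

```python
import math
from typing import List

Matrix = List[List[float]]  # rows = samples, columns = features


def center_columns(x: Matrix) -> Matrix:
    """Subtract each feature's mean across samples."""
    n = len(x)
    means = [sum(col) / n for col in zip(*x)]
    return [[v - m for v, m in zip(row, means)] for row in x]


def cross_sq_norm(x: Matrix, y: Matrix) -> float:
    """Squared Frobenius norm of X^T Y."""
    total = 0.0
    for col_x in zip(*x):
        for col_y in zip(*y):
            total += sum(a * b for a, b in zip(col_x, col_y)) ** 2
    return total


def linear_cka(x: Matrix, y: Matrix) -> float:
    """Linear CKA: ||X^T Y||_F^2 / (||X^T X||_F * ||Y^T Y||_F) on centered data."""
    xc, yc = center_columns(x), center_columns(y)
    numerator = cross_sq_norm(xc, yc)
    denominator = math.sqrt(cross_sq_norm(xc, xc) * cross_sq_norm(yc, yc))
    return numerator / denominator


# Toy latents for the same region from two seeds: seed_b is seed_a rotated
# by 90 degrees and scaled by 2, so the geometry is the same up to basis.
seed_a = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]
seed_b = [[0.0, 2.0], [-2.0, 0.0], [0.0, -2.0], [2.0, 0.0]]
# A one-dimensional signal uncorrelated with both features of seed_a.
unrelated = [[1.0], [-1.0], [1.0], [-1.0]]

same_geometry = linear_cka(seed_a, seed_b)
different_geometry = linear_cka(seed_a, unrelated)
```

Linear CKA is invariant to rotation and isotropic scaling of the feature space, which is why the rotated and rescaled copy scores as identical. That property makes it a reasonable candidate for asking whether two seeds learned the same region geometry in different bases.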