canvas-engineering¶

Prompt engineering, but for latent space.¶

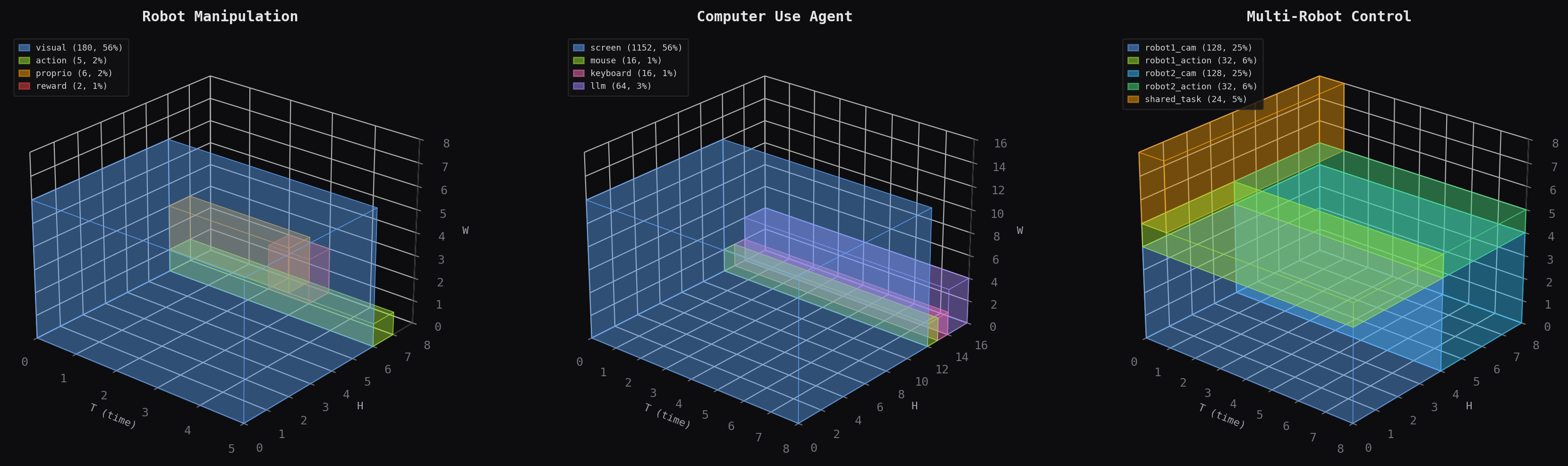

canvas-engineering gives video diffusion models structured latent space. You declare which regions carry video, actions, proprioception, reward, or thought — their geometry, temporal frequency, connectivity, attention function types, and loss participation — and the canvas compiles that declaration into attention masks, loss weights, and frame mappings.

The layout is the schema. The topology is the compute graph. Together they form a type system for multimodal latent computation.

Two orthogonal ideas¶

1. The canvas — structured multimodal latent space¶

Large video diffusion models generate video. The spatiotemporal canvas extends them to predict actions, estimate rewards, and process proprioception by placing heterogeneous modalities on a shared 3D grid. You design the schema, the model attends over everything.

2. Looped attention — weight-sharing regularization¶

Looped attention iterates transformer blocks with learned iteration embeddings. Result: 1.73x parameter efficiency (p<0.001). A frozen CogVideoX-2B backbone + 350K trainable loop parameters outperforms 11.5M unfrozen parameters. 3 loops is optimal.

Key features¶

Field+compile_schema()— Declare Python types whose fields are latent regions, compile to canvas schemas with auto-wired connectivityCanvasProgram— Typed process layer with families, carriers, clocks, and compile modesRegionSpec— Declare geometry, temporal frequency, loss weight, semantic type, and default attention function per regionCanvasTopology— Directed graph of attention operations with temporal constraints and per-edge function typesCanvasSchema— Portable JSON-serializable bundle (layout + topology + metadata)transfer_distance()— Cosine distance between semantic type embeddings estimates adapter cost- 18 attention function types — From standard cross-attention to Mamba, Perceiver, RWKV, CogVideoX-native, and more

- Temporal fill modes — DROP, HOLD, INTERPOLATE with higher-order IDW for cross-frequency attention

graft_looped_blocks()— One-line grafting onto CogVideoX with 350K trainable params

Install¶

Runnable examples¶

All examples train real models and generate visualizations. No GPU required.

| Example | What it shows |

|---|---|

| Hello Canvas Types | Declare, compile, train, visualize in 30 lines |

| Multi-Frequency Fusion | Bandwidth-proportional allocation vs flat baseline |

| CartPole Control | Real gym env, self-consistency loss, plan field PCA |

Next steps¶

- Quick Start — 30-line graft-and-train

- Canvas Types — The compositional type system

- The Canvas — How regions, frequency, and loss weighting work

- Attention Functions — The full lineup of 18 function types

- Program Layer — Typed process semantics for regions

- Design Recipes — Real-world schema patterns