brain-model

A set of experiments using Meta’s TRIBE v2 to model emotion, UX, virtual EEG, consciousness dynamics, and affective intervention from predicted brain activity.

brain-model is less a single application than a research notebook organized around one new capability: using Meta’s TRIBE v2 as a way to simulate brain activity from described stimuli, then asking what that enables.

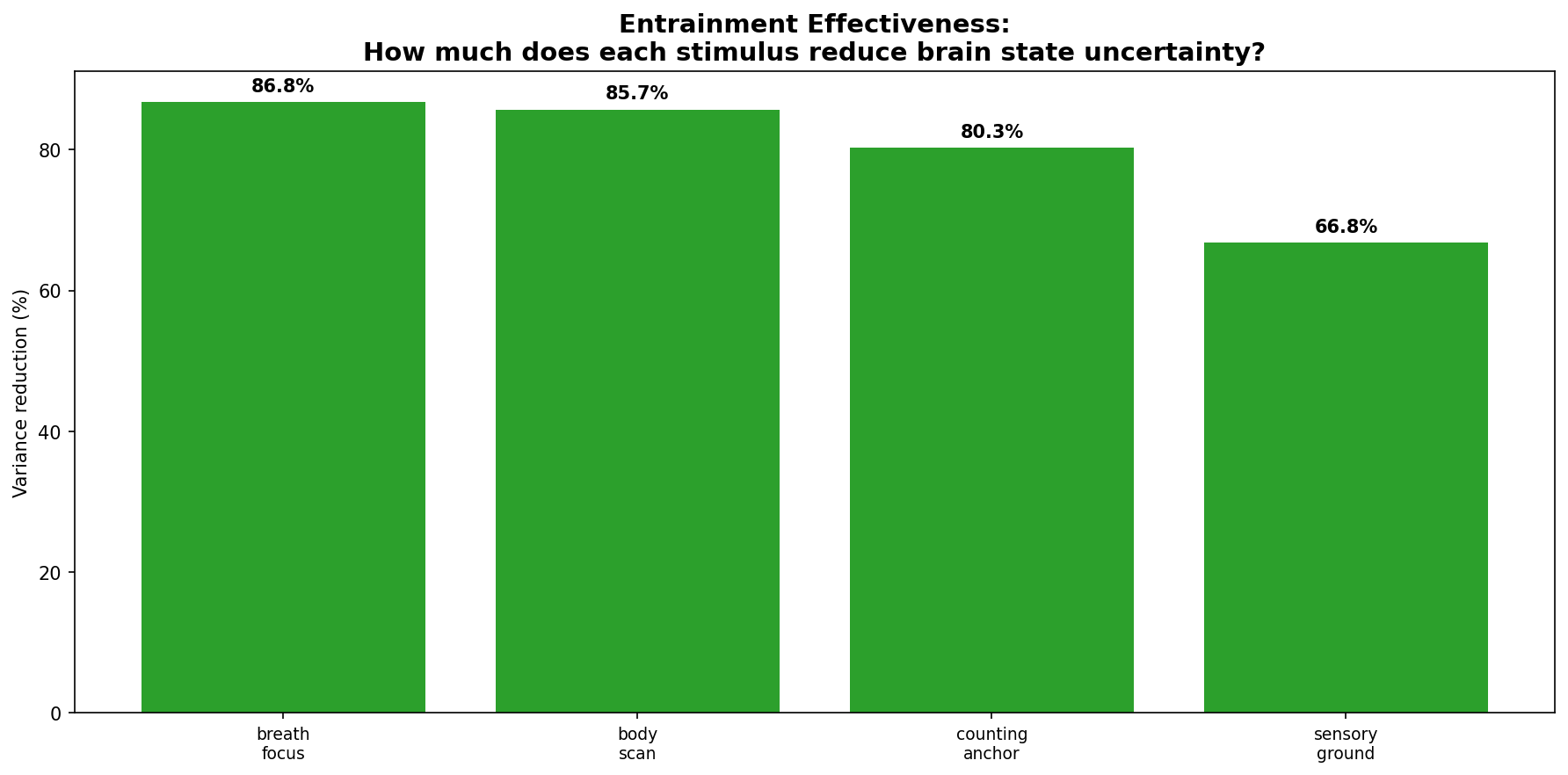

The repo breaks that question into a set of concrete experiments. There is work on emotional stimuli, virtual EEG, consciousness dynamics, affective intervention, and especially brain-informed UX. The strongest throughline is that a brain model becomes a new measurement instrument: not a substitute for neuroscience, but a way to cheaply prototype hypotheses that would otherwise require much heavier lab infrastructure.

The brain UX material is the part that feels most immediately product-facing. It defines a set of HIX signals (cognitive load, frustration, engagement, reward, confusion, flow, trust, and spatial clarity), then asks what different interface patterns do to those signals. The result is a design framing I find compelling: good and bad UX are not just aesthetic categories; they are different ways of spending or respecting cognition.
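To make the "spending versus respecting cognition" framing concrete, here is a minimal sketch of how one might represent the HIX signals and compare two interface patterns. The `HIXSignals` class, `signal_deltas`, `net_cognitive_cost`, the assumed [0, 1] scale, and the choice of which signals count as costs are all hypothetical. They are not taken from the repo's code.

```python
from dataclasses import dataclass, fields


# Hypothetical schema: field names follow the HIX signals listed above, but the
# float type, the assumed [0, 1] scale, and the cost/benefit split are assumptions.
@dataclass(frozen=True)
class HIXSignals:
    cognitive_load: float
    frustration: float
    engagement: float
    reward: float
    confusion: float
    flow: float
    trust: float
    spatial_clarity: float


# Assumed split: higher values of these signals mean cognition is being spent.
COST_SIGNALS = {"cognitive_load", "frustration", "confusion"}


def signal_deltas(baseline: HIXSignals, variant: HIXSignals) -> dict[str, float]:
    """Per-signal change (variant minus baseline) when swapping an interface pattern."""
    return {
        f.name: getattr(variant, f.name) - getattr(baseline, f.name)
        for f in fields(HIXSignals)
    }


def net_cognitive_cost(deltas: dict[str, float]) -> float:
    """Positive when a variant spends more cognition than it gives back.

    Increases in cost signals count against the variant; increases in every
    other signal count in its favor.
    """
    return sum(d if name in COST_SIGNALS else -d for name, d in deltas.items())
```

The flat sum weights every signal equally, which is the simplest possible choice; a real comparison would likely need per-signal weights or at least per-signal reporting.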

The accompanying deck and video outline sharpen that thesis. The point is not merely “brain optimization for interfaces.” It is that some interfaces are metabolically violent to cognition, and that brain-derived signals could serve as reward functions for autonomous design systems, provided those systems are constrained by trust rather than optimized purely for reward or engagement.
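The reward-function idea, with trust acting as a constraint rather than one more quantity to maximize, can be sketched as a scoring function for candidate designs. This is a hypothetical illustration, not the repo's implementation: the signal names, the equal weights, and the `trust_floor` default are assumptions.

```python
import math
from typing import Mapping

# Hypothetical weights over brain-derived signals; the real reward design may differ.
BENEFIT_WEIGHTS = {"engagement": 1.0, "reward": 1.0, "flow": 1.0}
COST_WEIGHTS = {"cognitive_load": 1.0, "frustration": 1.0, "confusion": 1.0}


def constrained_design_reward(
    signals: Mapping[str, float],
    trust_floor: float = 0.5,  # assumed threshold, not from the source
) -> float:
    """Score a candidate interface from predicted brain-derived signals.

    Trust is a hard constraint, not a term to trade off: a design below the
    trust floor is rejected outright (reward -inf), so an optimizer cannot
    buy engagement by spending trust.
    """
    if signals["trust"] < trust_floor:
        return -math.inf
    benefit = sum(w * signals[k] for k, w in BENEFIT_WEIGHTS.items())
    cost = sum(w * signals[k] for k, w in COST_WEIGHTS.items())
    return benefit - cost
```

An autonomous design loop could then pick among candidates with `max(candidates, key=constrained_design_reward)`. A hard floor is one design choice among several; a soft trust penalty would let a large enough engagement gain outweigh a trust loss, which is the failure mode the thesis warns about.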

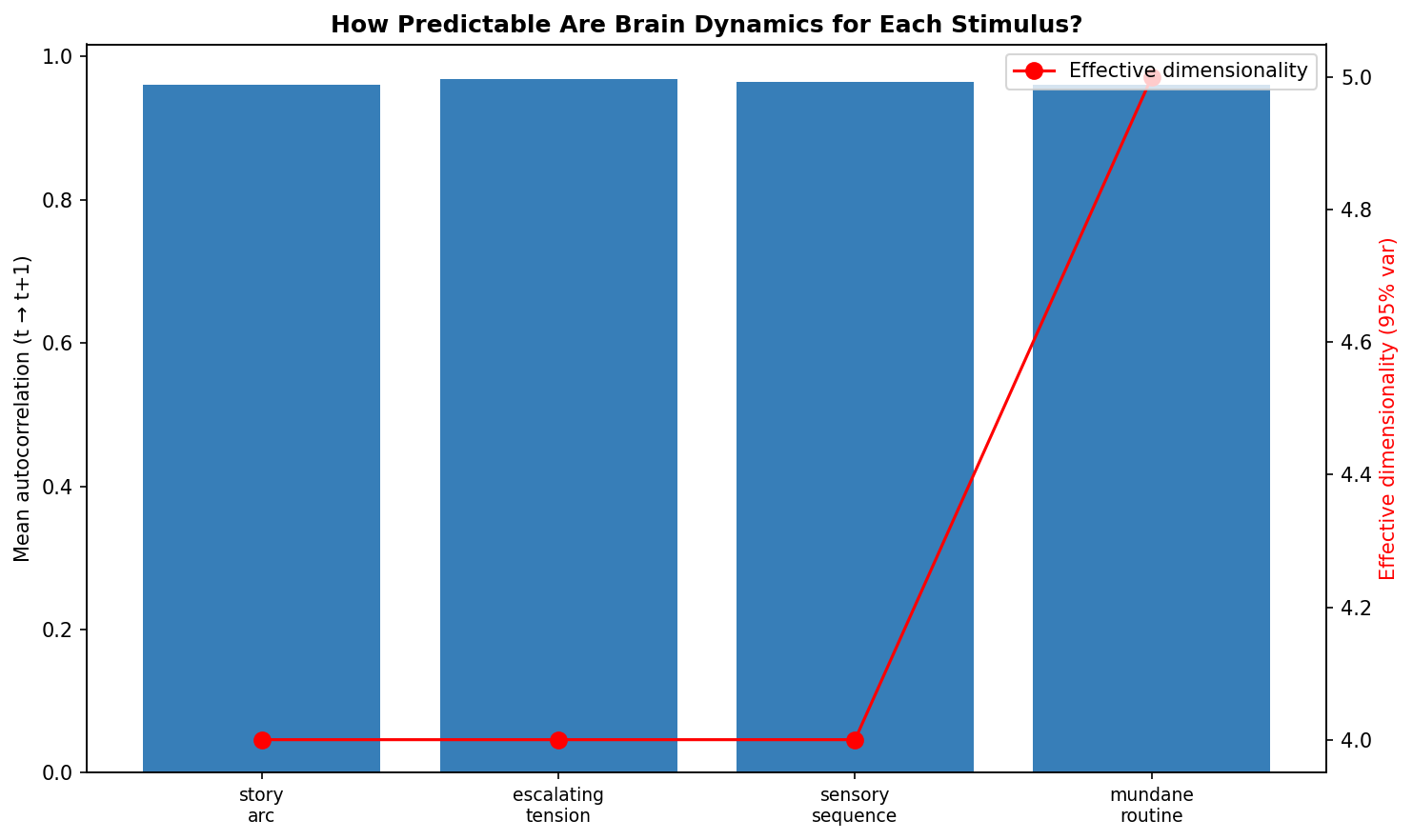

I also like that the repo does not stop at UX. The virtual EEG and consciousness experiments push toward a broader question: if high-dimensional brain activity evolves on low-dimensional trajectories, then perhaps some of the right abstractions for intelligence and experience are dynamical before they are symbolic.
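One standard way to check whether high-dimensional activity evolves on a low-dimensional trajectory is principal component analysis: if a few components explain most of the variance, the path through those components is a compact description of the dynamics. The sketch below runs PCA via NumPy's SVD on synthetic data built to contain a 2-D latent cycle. It does not use TRIBE v2 output, and PCA is a generic technique swapped in here, not necessarily what the repo's experiments use. The function name `pca_trajectory` is hypothetical.

```python
import numpy as np


def pca_trajectory(activity: np.ndarray, k: int = 2) -> tuple[np.ndarray, np.ndarray]:
    """Project a (time, voxels) activity matrix onto its top-k principal components.

    Returns the k-dimensional trajectory, shape (time, k), and the fraction of
    total variance explained by each of the k components.
    """
    centered = activity - activity.mean(axis=0)
    # SVD of the centered data: rows of vt are the principal axes.
    _, s, vt = np.linalg.svd(centered, full_matrices=False)
    trajectory = centered @ vt[:k].T
    explained = s**2 / np.sum(s**2)
    return trajectory, explained[:k]


# Synthetic stand-in for predicted brain activity (NOT TRIBE v2 output):
# a 2-D rotating latent state linearly embedded in 500 "voxels" plus noise.
rng = np.random.default_rng(0)
t = np.linspace(0, 4 * np.pi, 300)
latent = np.stack([np.cos(t), np.sin(t)], axis=1)  # (300, 2)
mixing = rng.normal(size=(2, 500))                 # (2, 500)
activity = latent @ mixing + 0.1 * rng.normal(size=(300, 500))

trajectory, explained = pca_trajectory(activity, k=2)
```

Because the data were constructed from a 2-D cycle, two components capture nearly all of the variance here. On real predicted activity, the explained-variance curve is the thing to inspect, and a linear method like PCA can miss curved, nonlinear structure.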

Repo: JacobFV/brain-model